Code Metrics – Feeling the need for a new software metric

This article was written in July 2004 by Mark Miller, chief architect of IDE tools. It was first posted on Mark’s original blog, which predates his current one, but that blog and the article were later lost. I am reposting it here, with his permission, so everyone can learn something new about the code metrics available in the CodeRush and Refactor! products.

Mark Miller

Saturday, July 03, 2004

Feelin’ the need, the need for a new software metric

I’m getting the strong feeling that the software metrics available today are pretty weak at quickly calculating the complexity, and hence the maintenance cost, of the software we create.

It’s About the Cost of Maintenance

I used to think that the more lines of code you had, the better; it was a measure of sophistication, complexity, and skill. How I admired those code jockeys who could claim code bases of a million lines or more.

These days, when someone proudly mentions their million-line code base, I let them know it has quite likely got a serious maintenance problem. The fastest way to a million lines is through the clipboard. Copied code is like cancer for software.

Simpler methods and accessors facilitate reuse by making the bits and pieces you need more accessible. Granted, reuse through smaller, simpler methods requires a little more initial investment than using the clipboard, but it comes without the clipboard’s long-term damage, and smaller code blocks are easier to understand and maintain. Smaller methods also lower the risk of introducing a defect during maintenance.

So to get our code in shape, we need to identify the parts that are overweight. That’s where software metrics come in.

Existing Metrics

Well, we have loads of metrics already, including:

- Cyclomatic complexity. Fun to say at parties. Easy to calculate. Probably the simplest measure of complexity. CC is the number of decision points in a method plus one. It also equals the number of linearly independent paths through the method, which is the number of test cases needed for basis-path testing (and an upper bound on the number needed to exercise every branch).

- Fan-out. Number of function calls plus property references inside a method.

- Fan-in. Number of calls to a property or method from the outside world.

- Halstead Metrics. A family of measures (length, vocabulary, volume, difficulty, effort) derived from counts of distinct and total operators and operands.

- And more. Line counts, statement counts, comment counts, white space counts, etc.
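To make the "decision points plus one" definition concrete, here is a minimal sketch that computes CC for a Python function using the standard `ast` module. CodeRush analyzes .NET code, so this is only an illustration of the counting rule, not the product's implementation; which constructs count as decision points is an assumption (tools differ), and `cyclomatic_complexity` and the sample function are hypothetical.

```python
import ast

# Node types counted as decision points in this sketch. This choice is an
# assumption: real tools differ on what they count (switch cases, ?:, etc.).
DECISION_NODES = (ast.If, ast.IfExp, ast.For, ast.While, ast.ExceptHandler)


def cyclomatic_complexity(source: str) -> int:
    """Return decision points + 1 for the first function defined in `source`."""
    tree = ast.parse(source)
    func = next(n for n in ast.walk(tree) if isinstance(n, ast.FunctionDef))
    decisions = 0
    for node in ast.walk(func):
        if isinstance(node, DECISION_NODES):
            decisions += 1
        elif isinstance(node, ast.BoolOp):
            # `a and b` short-circuits, adding one branch per extra operand.
            decisions += len(node.values) - 1
    return decisions + 1


SAMPLE = '''
def shipping_cost(weight, express):
    if weight <= 0:
        raise ValueError("weight must be positive")
    cost = 5.0
    while weight > 10:
        cost += 2.0
        weight -= 10
    if express and weight > 1:
        cost *= 2
    return cost
'''

print(cyclomatic_complexity(SAMPLE))  # 5: if, while, if, `and` (+1)
```

The sample has four decision points (two `if`s, one `while`, one short-circuit `and`), so its CC is 5.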

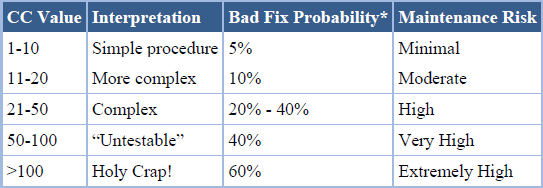

All of these metrics come with recommended thresholds. If code exceeds these thresholds, it may be more complex than it needs to be. For example, most discussions of cyclomatic complexity include a table similar to the following:

*Bad Fix Probability represents the odds of introducing an error while maintaining code.

But it’s not just about counting lines, operators, comments, white space, or decision points. Some constructs are simply harder to maintain than others. For example, how decision points are arranged affects long-term maintenance risk (see Fowler’s Replace Nested Conditional with Guard Clauses refactoring). This refactoring preserves the logic of the method while reducing the nesting of its decision points. Because the number of decision points stays the same, the CC value is identical for the method before and after the refactoring; however, the refactored version is cheaper to maintain.
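The point that CC cannot see this difference can be checked mechanically. The sketch below parses two hypothetical versions of a pay-calculation method (all names are illustrative assumptions), one with nested conditionals and one with guard clauses, and reports CC alongside the deepest nesting of `if` statements. It counts only `if` statements as decision points because these methods contain no others.

```python
import ast

# Two hypothetical versions of the same method, before and after
# "Replace Nested Conditional with Guard Clauses". They are only parsed,
# never executed, so the helper functions they call need not exist.
NESTED = '''
def pay_amount(employee):
    if employee.is_dead:
        result = dead_amount()
    else:
        if employee.is_separated:
            result = separated_amount()
        else:
            if employee.is_retired:
                result = retired_amount()
            else:
                result = normal_pay_amount()
    return result
'''

GUARDED = '''
def pay_amount(employee):
    if employee.is_dead:
        return dead_amount()
    if employee.is_separated:
        return separated_amount()
    if employee.is_retired:
        return retired_amount()
    return normal_pay_amount()
'''


def if_depth(node, depth=0):
    """Deepest chain of `if` statements nested inside one another."""
    if isinstance(node, ast.If):
        depth += 1
    return max([depth] + [if_depth(c, depth) for c in ast.iter_child_nodes(node)])


def metrics(source):
    """Return (cyclomatic complexity, max if-nesting) for `source`."""
    tree = ast.parse(source)
    decisions = sum(isinstance(n, ast.If) for n in ast.walk(tree))
    return decisions + 1, if_depth(tree)


for name, src in (("nested", NESTED), ("guarded", GUARDED)):
    cc, depth = metrics(src)
    print(f"{name}: CC={cc}, max nesting={depth}")
```

Both versions report CC=4, while the nesting depth drops from 3 to 1: exactly the difference a plain decision-point count misses.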

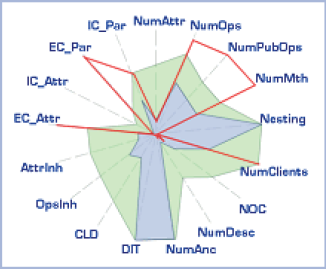

One of the very telling indications that current metrics are not up to snuff is the relatively common use of Kiviat diagrams to display metric threshold adherence and violations.

If you’re not familiar with Kiviat diagrams (consider yourself lucky), they are a neat way of combining voodoo magic with data (alright, that’s my opinion), and they tend to produce results that seem to imply meaning which simply isn’t there.

Kiviat diagrams position metrics of interest around a center point. The range of possible values for each metric is represented by a line running from the center of the diagram (the min value for that metric, usually zero) to the outside edge.

In the example above, the green shape represents acceptable threshold values for each metric. The blue region represents averages for the entire project, and the red region represents a cross section of the project (e.g., a single class or method).

The problem with Kiviat diagrams is that they give equal visual weight to unequal metrics (for example, you really can’t equate excessive cyclomatic complexity with excessive commenting). Additionally, Kiviat diagrams combine a series of one-dimensional values into a two-dimensional shape. Further obscuring the results is the frequent attempt to divine meaning by calculating the area of that shape.

Depending on the metrics you’ve selected to chart, their positions on the Kiviat chart, and the relative severity of one metric violation compared with its neighbors, you can get wildly varying shapes for the exact same data. The calculated area will be large when heavily violated metrics happen to be adjacent in the diagram, but close to zero if those violations are separated by a metric with a very low value.
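The ordering problem follows directly from how a Kiviat polygon's area is computed. Assuming equally spaced axes and values already scaled to a common range, each pair of neighboring axes forms a triangle with the center whose area is r_i * r_j * sin(2π/n) / 2, so only products of adjacent values contribute. This sketch uses hypothetical violation scores, not data from any real project.

```python
import math
from itertools import permutations


def kiviat_area(values):
    """Area of the polygon drawn by plotting `values` on equally spaced axes.

    Each pair of neighbouring axes forms a triangle with the centre whose
    area is r_i * r_j * sin(angle) / 2, where angle = 2*pi / len(values).
    """
    n = len(values)
    half_sin = math.sin(2 * math.pi / n) / 2
    return sum(half_sin * values[i] * values[(i + 1) % n] for i in range(n))


# Hypothetical violation scores for four metrics: two badly violated, two fine.
scores = [10, 10, 0, 0]

print(kiviat_area(scores))          # 50.0: violations on adjacent axes
print(kiviat_area([10, 0, 10, 0]))  # 0.0: same data, violations separated

# Every ordering of the same four numbers, rounded to hide float noise.
areas = {round(kiviat_area(p), 6) for p in permutations(scores)}
print(min(areas), max(areas))
```

The same two severe violations yield an area of 50 or 0 depending solely on where the axes happen to be placed.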

So I’m in the mood for a new metric. Something simple like CC, but smarter. For example, decision points should be 1 point like CC, but guard clauses at the top of a method should only be 0.5 points. And as the nested level of a conditional increases, so should the points assigned (e.g., add an extra point for each level nested). Similar point scaling should be applied to nested and compound expressions. And the metric should take everything into consideration: parameter counts, operators, visibility, etc., etc., etc., so we end up with a single number that represents the long-term maintenance risk of a method or property.
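A rough sketch of the point scheme described above might look like the following, restricted to the rules the paragraph states explicitly: 1 point per decision point, 0.5 for a guard clause at the top of a method, and 1 extra point per level of nesting. Everything else is an assumption: the definition of a guard clause, the set of constructs scored, and the names `maintenance_score` and `first_negative` are hypothetical, and this is not how any CodeRush metric is actually computed. Parameter counts, operators, and visibility are left out.

```python
import ast

# Decision constructs scored in this sketch (an assumption). Note that
# Python stores `elif` as an `if` inside `orelse`, so elif chains score
# as nested here.
DECISIONS = (ast.If, ast.For, ast.While)


def is_guard(stmt):
    """Assumed definition: an `if` with no else whose body ends in return/raise."""
    return (isinstance(stmt, ast.If) and not stmt.orelse
            and isinstance(stmt.body[-1], (ast.Return, ast.Raise)))


def weigh(node, depth):
    """Points for `node` and its subtree; `depth` counts enclosing decisions."""
    points = 0.0
    if isinstance(node, DECISIONS):
        points += 1 + depth  # 1 point like CC, plus 1 per level of nesting
        depth += 1
    for child in ast.iter_child_nodes(node):
        points += weigh(child, depth)
    return points


def maintenance_score(source):
    """Score the first function in `source` using the sketched rules."""
    tree = ast.parse(source)
    func = next(n for n in ast.walk(tree) if isinstance(n, ast.FunctionDef))
    points, leading = 0.0, True
    for stmt in func.body:
        if leading and is_guard(stmt):
            # Guard clause at the top of the method: half a point. Anything
            # inside it is treated as nested one level deep.
            points += 0.5 + sum(weigh(c, 1) for c in ast.iter_child_nodes(stmt))
        else:
            leading = False
            points += weigh(stmt, 0)
    return points


GUARDED = '''
def first_negative(items):
    if items is None:
        return None
    for x in items:
        if x < 0:
            return x
    return None
'''

NESTED = '''
def first_negative(items):
    if items is not None:
        for x in items:
            if x < 0:
                return x
    return None
'''

print(maintenance_score(GUARDED))  # 3.5
print(maintenance_score(NESTED))   # 6.0
```

Both versions have a CC of 4 (three decision points plus one), but under these rules the guard-clause version scores 3.5 and the nested version 6.0.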

Of course, I still want to be able to drill down into that number. Maybe I could create a 3D half-sphere semi-rotating Super Kiviat diagram using DirectX….

If this mood holds much longer, I may just act on it.

Products: CodeRush Pro | Versions: 10.1 and up | VS IDEs: any | Updated: Jan/21/2010 | ID: T035